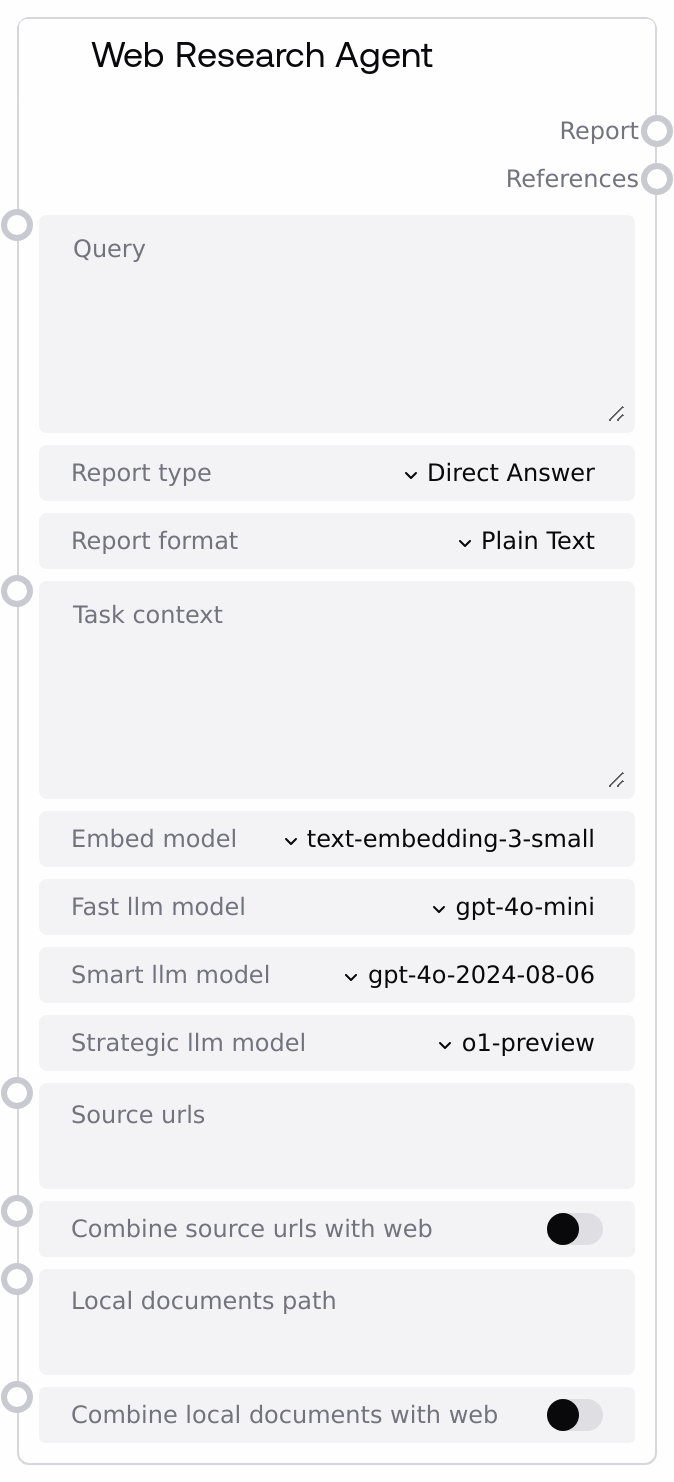

Web Research Agent

Runs an automated research workflow over the web, local documents, or both, and returns a formatted report plus references. It builds a research query from your input and task context, optionally restricts sources to given URLs or local files, and uses the selected LLM and embedding models to generate results in the chosen format.

Usage

Use this node when you need structured, citation-backed research on a topic. Provide a clear query, select the desired report type and output format, and optionally constrain sources (URLs or local documents). Integrate it into workflows that require research summaries, comparative analyses, or data-oriented outputs (CSV/JSON) for downstream processing.

Inputs

| Field | Required | Type | Description | Example |
|---|---|---|---|---|
| query | True | STRING | The main research question or topic to investigate. Can be multi-line. | Assess the market outlook for residential solar in the EU over the next 5 years. |
| report_type | True | CHOICE | Specifies the style of output. Options: Direct Answer, Concise Summary, Detailed Report, Comparative Analysis, News Article, How-To Guide, Creative Writing. | Detailed Report |
| report_format | True | CHOICE | Specifies the output format. Options: Plain Text, Markdown, CSV, JSON, HTML, LaTeX. | Markdown |
| task_context | True | STRING | Additional context to guide the research (e.g., form inputs, target schema, constraints). Leave empty if not needed. | Audience: non-technical executives. Focus on installation cost trends and policy incentives. Include a short risks section. |
| embed_model | True | CHOICE | Embedding model used to gather and rank relevant context. | text-embedding-3-small |
| fast_llm_model | True | CHOICE | LLM for fast operations like brief summaries or lightweight steps. | gpt-4o-mini |
| smart_llm_model | True | CHOICE | LLM for more complex reasoning and full report generation. | gpt-4o-2024-08-06 |
| strategic_llm_model | True | CHOICE | LLM for strategic planning tasks (research plans and strategies) within the workflow. | o1-preview |
| source_urls | True | STRING | Optional comma-separated list of URLs to restrict the research sources. No page traversal beyond provided URLs. Leave empty to use general web search. | https://www.iea.org/reports/solar-pv, https://ec.europa.eu/energy_topics |
| combine_source_urls_with_web | True | BOOLEAN | If true, combines general web search with the provided source URLs. If false, research will be limited to the specified URLs when provided. | true |
| local_documents_path | True | STRING | Optional filesystem path to a folder of local documents to include in the research. If set, the node will incorporate these documents into the evidence. | /data/research/solar_policy_docs |
| combine_local_documents_with_web | True | BOOLEAN | If true, includes default web search in addition to local documents. If false and only local docs are provided, research emphasizes local materials. | false |
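
The interaction between source_urls and combine_source_urls_with_web can be sketched in Python. This is a minimal illustration of the rules described in the table, not the node's actual implementation: the helper names `parse_source_urls` and `source_mode`, and the mode labels they return, are hypothetical.

```python
def parse_source_urls(raw: str) -> list[str]:
    """Split the comma-separated source_urls string into clean URLs."""
    return [url.strip() for url in raw.split(",") if url.strip()]


def source_mode(source_urls: str, combine_source_urls_with_web: bool) -> str:
    """Return a hypothetical label for the sources the documented rules imply."""
    urls = parse_source_urls(source_urls)
    if not urls:
        # Empty source_urls: the node falls back to general web search.
        return "web"
    # URLs given: the flag decides whether web search is added on top.
    return "urls+web" if combine_source_urls_with_web else "urls-only"


# Input values taken from the Example column of the table above.
inputs = {
    "query": "Assess the market outlook for residential solar in the EU over the next 5 years.",
    "report_type": "Detailed Report",
    "report_format": "Markdown",
    "source_urls": "https://www.iea.org/reports/solar-pv, https://ec.europa.eu/energy_topics",
    "combine_source_urls_with_web": True,
}

print(source_mode(inputs["source_urls"], inputs["combine_source_urls_with_web"]))
# prints: urls+web
```

Setting combine_source_urls_with_web to false with the same URLs would give `urls-only` in this sketch, matching the "limited to the specified URLs" behavior described above.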

Outputs

| Field | Type | Description | Example |
|---|---|---|---|
| report | STRING | The generated research report in the selected report format and style. | ## EU Residential Solar Outlook (2025–2030) ... (Markdown content) |
| references | STRING | Reference list or citations used to generate the report. Typically includes URLs and/or document identifiers. | - https://www.iea.org/... - https://ec.europa.eu/... |
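
Because references is returned as a single string, a downstream step may want the individual URLs. The exact layout of that string is not specified here, so the following Python sketch only assumes that URLs appear somewhere in the text; `extract_reference_urls` is a hypothetical helper, and document identifiers that are not URLs are ignored.

```python
import re

# Matches http(s) URLs up to the next whitespace or common closing delimiter.
URL_PATTERN = re.compile(r"https?://[^\s,)>\]]+")


def extract_reference_urls(references: str) -> list[str]:
    """Return the unique URLs found in the references string, in order."""
    seen: set[str] = set()
    urls: list[str] = []
    for match in URL_PATTERN.findall(references):
        url = match.rstrip(".;")  # drop trailing sentence punctuation
        if url not in seen:
            seen.add(url)
            urls.append(url)
    return urls


sample = "- https://example.com/report\n- https://example.org/policy\n- https://example.com/report"
print(extract_reference_urls(sample))
# prints: ['https://example.com/report', 'https://example.org/policy']
```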

Important Notes

- Report formatting is strict: The output is crafted to match the selected report_format (e.g., CSV/JSON/HTML). Downstream steps should expect that exact format.

- Source control: Providing source_urls limits research to those URLs unless you set combine_source_urls_with_web to true.

- Local documents: Setting local_documents_path includes local files; ensure the path is accessible to the backend environment executing the research.

- Context injection: task_context is appended to the research prompt; include schemas for CSV/JSON outputs to get structured data.

- Model selection: Different LLMs are used for fast, smart, and strategic steps; choose models appropriate for your cost/quality needs.

- Timeouts: Requests generally time out after several minutes; very broad or complex queries may require narrowing the scope.

- Usage limits: Access to this node may be subject to organizational limits for web research usage.
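
Since downstream steps depend on the report matching report_format, it can help to check machine-readable outputs before passing them on. The sketch below is one possible check written with the Python standard library; `check_report` is a hypothetical helper, not part of the node, and it only covers the JSON and CSV formats.

```python
import csv
import io
import json


def check_report(report: str, report_format: str) -> bool:
    """Return True if the report parses as the selected format.

    Only JSON and CSV are checked; every other format is accepted as-is.
    """
    if report_format == "JSON":
        try:
            json.loads(report)
        except json.JSONDecodeError:
            return False
        return True
    if report_format == "CSV":
        # Ignore blank lines, then require every row to match the header width.
        rows = [row for row in csv.reader(io.StringIO(report)) if row]
        if not rows:
            return False
        width = len(rows[0])
        return all(len(row) == width for row in rows)
    return True


print(check_report('{"country": "DE", "cost_eur_per_kw": 1400}', "JSON"))  # prints: True
print(check_report("country,cost\nDE,1400,extra\n", "CSV"))  # prints: False
```

A failed check is a reasonable trigger for retrying with a more explicit schema in task_context.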

Troubleshooting

- Service error or timeout: If you get an error or no response, try simplifying the query, reducing scope, or verifying connectivity. Consider using fewer or more specific sources.

- Empty or low-quality references: Provide source_urls to enforce credible sources, or add task_context specifying citation requirements.

- Wrong output format: If the report content doesn’t conform (e.g., invalid CSV/JSON), clarify the desired schema in task_context and retry.

- Local documents not found: Verify local_documents_path exists and is reachable by the backend. Use absolute paths and correct permissions.

- Model not available: If a selected model fails, switch to another supported model or revert to the default selection.

- Results too generic: Add detailed task_context (audience, required sections, metrics, comparison criteria) and select a more capable smart_llm_model.
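
For the "Local documents not found" case, a quick pre-flight check can narrow down the cause. The following Python sketch uses only the standard library and must run in the same environment as the backend to be meaningful; `check_local_documents_path` is a hypothetical helper, and the checks it performs are assumptions about what commonly goes wrong rather than the node's own validation.

```python
import os
from pathlib import Path


def check_local_documents_path(path: str) -> list[str]:
    """Return a list of problems found with the folder; empty means it looks usable."""
    problems: list[str] = []
    folder = Path(path)
    if not folder.is_absolute():
        problems.append("path is not absolute")
    if not folder.is_dir():
        problems.append("path does not exist or is not a directory")
    elif not os.access(folder, os.R_OK | os.X_OK):
        problems.append("directory is not readable by this process")
    elif not any(folder.iterdir()):
        problems.append("directory is empty")
    return problems


for problem in check_local_documents_path("/data/research/solar_policy_docs"):
    print(problem)
```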